lxml web scraping uses Python's lxml library and XPath to extract data from HTML quickly and precisely. Because it is built on the etree module and a fast C-based HTML parser, it is often quicker and more dependable than many alternatives for structured data extraction and pagination handling.

Table of Contents

- What is lxml web scraping?

- Key Definitions

- How do I use lxml and XPath to scrape a website?

- How do I handle pagination?

- Is lxml faster than other parsers?

- Scraping responsibly

- Common mistakes + fixes

- Comparison table

- FAQ

- Summary

What is lxml web scraping?

lxml web scraping means extracting information from websites in Python with the lxml library and XPath expressions. It combines a high-performance HTML parser with precise element selection.

In the USA it is widely used for data collection, SEO monitoring, and research automation.

Key Definitions

- lxml: A Python library for processing XML and HTML efficiently.

- XPath: A query language for navigating HTML/XML documents and selecting elements.

- HTML parser: Software that turns raw HTML into a structured document tree.

- etree: The lxml module for parsing and traversing HTML/XML trees.

- Pagination: Moving programmatically through content that is split across multiple pages.

- Performance: How quickly and efficiently data is extracted.
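To see how the HTML parser and etree fit together, the sketch below parses a small, deliberately malformed snippet held in a string (the markup is made up for illustration, so no network access is needed). lxml's HTML parser repairs the unclosed `<p>` tags, and an XPath query then selects elements from the resulting tree.

```python
from lxml import etree

# Hypothetical, deliberately broken HTML: neither <p> tag is closed.
raw_html = "<div id='intro'><p>Hello<p>World</div>"

# etree.HTML() runs lxml's forgiving HTML parser and returns the root <html> element.
root = etree.HTML(raw_html)

# XPath navigates the repaired tree: the text of every <p> inside the div.
paragraphs = root.xpath("//div[@id='intro']/p/text()")
print(paragraphs)
```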

How do I use lxml and XPath to scrape a website?

Scraping with lxml involves installing the dependencies, fetching and parsing the page, and extracting elements with XPath.

Step 1: Install libraries

```bash
pip install lxml requests
```

Step 2: Fetch and parse HTML

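```python
import requests
from lxml import etree

url = "https://example.com"

# Fetch the page; fail fast on HTTP errors or a hung connection.
response = requests.get(url, timeout=10)
response.raise_for_status()

# Parse the raw bytes with lxml's HTML parser.
parser = etree.HTMLParser()
tree = etree.fromstring(response.content, parser)
```

Step 3: Retrieve data with XPath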

```python
# Select the text of every <h2> element on the page.
titles = tree.xpath("//h2/text()")

for title in titles:
    print(title.strip())
```
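One caveat about `text()`: it returns only text that sits directly inside the `<h2>`, so a heading whose text is wrapped in a link is silently skipped. The sketch below uses a made-up snippet to contrast `text()` with joining all descendant text through `itertext()`.

```python
from lxml import etree

# Hypothetical markup: the first heading wraps its text in a link.
html_doc = "<body><h2><a href='/first'>First post</a></h2><h2>Second post</h2></body>"
tree = etree.HTML(html_doc)

# text() only sees direct text children, so the linked title is missed.
direct_text = tree.xpath("//h2/text()")

# itertext() walks all descendant text, so nested tags are included.
full_titles = ["".join(h2.itertext()).strip() for h2 in tree.xpath("//h2")]

print(direct_text)  # ['Second post']
print(full_titles)  # ['First post', 'Second post']
```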

Why use XPath?

- Precise element targeting

- Supports attributes, hierarchy, and conditions

- More reliable than parsing HTML with regular expressions
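The XPath features listed above can be sketched against a small hypothetical product page (the class names, prices, and URL below are illustrative assumptions, not taken from a real site):

```python
from lxml import etree

HTML_DOC = """
<html><body>
  <div class="product"><h3>Lamp</h3><span class="price">19.99</span></div>
  <div class="product sale"><h3>Desk</h3><span class="price">89.00</span></div>
  <a class="next" href="/products?page=2">Next</a>
</body></html>
"""

tree = etree.HTML(HTML_DOC)

# Attribute: read the href of the "next" link.
next_href = tree.xpath("//a[@class='next']/@href")

# Hierarchy: <h3> titles inside product divs; contains() also matches "product sale".
titles = tree.xpath("//div[contains(@class, 'product')]/h3/text()")

# Condition on an attribute: only products marked as on sale.
sale_titles = tree.xpath("//div[contains(@class, 'sale')]/h3/text()")

# Condition on a value: products whose price span is below 50.
cheap_titles = tree.xpath("//div[span[@class='price'] < 50]/h3/text()")

print(next_href)     # ['/products?page=2']
print(titles)        # ['Lamp', 'Desk']
print(sale_titles)   # ['Desk']
print(cheap_titles)  # ['Lamp']
```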

How do I handle pagination?

Pagination means automatically requesting each page of a multi-page listing in turn.

Example:

```python
import requests
from lxml import etree

for page in range(1, 6):
    url = f"https://example.com/products?page={page}"
    response = requests.get(url, timeout=10)
    tree = etree.HTML(response.content)
    # Product titles on the current page.
    products = tree.xpath("//div[@class='product']/h3/text()")
    print(products)
```

Tips:

- Look for URL patterns such as ?page=2 or /page/2/

- Stop when a page returns no results

- Add delays between requests
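Putting the tips together, here is a minimal sketch of a pagination loop that stops at the first empty page and sleeps between requests. To keep it testable offline, fetching is delegated to a hypothetical `fetch_page(page)` callable; the `//div[@class='product']/h3` selector is the same assumed page structure used in the example above.

```python
import time
from typing import Callable, List

from lxml import etree


def scrape_all_pages(fetch_page: Callable[[int], bytes],
                     max_pages: int = 50,
                     delay: float = 1.0) -> List[str]:
    """Collect product titles page by page until a page has no results.

    fetch_page is a hypothetical callable returning the raw HTML bytes
    for a page number (for example, a thin wrapper around requests.get).
    """
    titles: List[str] = []
    for page in range(1, max_pages + 1):
        tree = etree.HTML(fetch_page(page))
        # etree.HTML() can return None for an empty response; treat it as the end.
        if tree is None:
            break
        products = tree.xpath("//div[@class='product']/h3/text()")
        if not products:
            break  # No results: we have gone past the last page.
        titles.extend(p.strip() for p in products)
        time.sleep(delay)  # Pause between requests to limit server load.
    return titles


# Usage with a live site (assumes the ?page= URL pattern shown above):
#
#   import requests
#
#   def fetch_page(page):
#       url = f"https://example.com/products?page={page}"
#       response = requests.get(url, timeout=10)
#       response.raise_for_status()
#       return response.content
#
#   print(scrape_all_pages(fetch_page))
```

The `max_pages` cap is a safety net so a site that never returns an empty page cannot trap the loop forever.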

Is lxml faster than other parsers?

Yes. lxml is generally faster than BeautifulSoup because it is built on the C libraries libxml2 and libxslt, and it scales better for large scraping jobs.

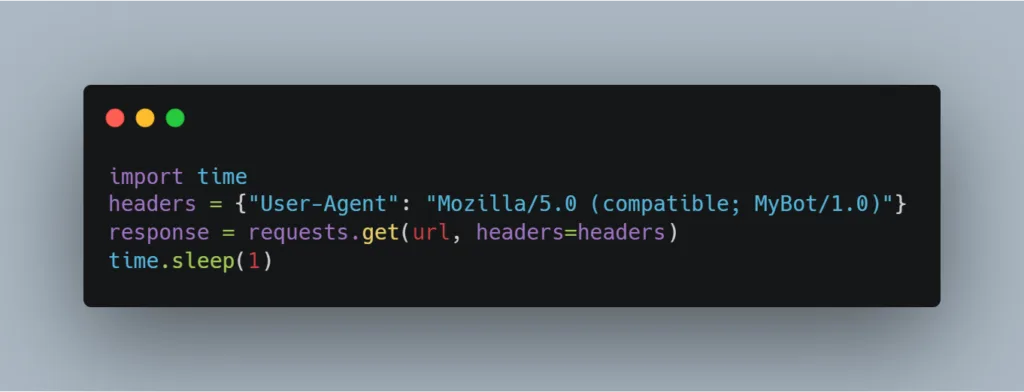
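To check the speed claim on your own machine, a rough timing sketch follows. Because BeautifulSoup may not be installed, it compares lxml against Python's built-in `html.parser` module instead (a swapped-in pure-Python baseline, not BeautifulSoup itself). The synthetic page size and run count are arbitrary assumptions.

```python
import timeit
from html.parser import HTMLParser

from lxml import etree

# Synthetic test page with 2,000 <h2> headings (arbitrary size, for timing only).
HTML_DOC = "<html><body>" + "".join(
    f"<div><h2>Item {i}</h2></div>" for i in range(2000)
) + "</body></html>"


def count_h2_lxml(doc: str) -> int:
    """Parse with lxml's C-based parser and count <h2> elements via XPath."""
    return len(etree.HTML(doc).xpath("//h2"))


class _H2Counter(HTMLParser):
    """Pure-Python baseline: counts <h2> start tags with the stdlib parser."""

    def __init__(self) -> None:
        super().__init__()
        self.count = 0

    def handle_starttag(self, tag, attrs):
        if tag == "h2":
            self.count += 1


def count_h2_stdlib(doc: str) -> int:
    parser = _H2Counter()
    parser.feed(doc)
    parser.close()
    return parser.count


if __name__ == "__main__":
    for fn in (count_h2_lxml, count_h2_stdlib):
        seconds = timeit.timeit(lambda: fn(HTML_DOC), number=20)
        print(f"{fn.__name__}: {seconds:.3f}s for 20 parses")
```

Timings vary by machine and document, and the stdlib baseline only counts tags without building a tree, so treat the numbers as a rough indication rather than a formal benchmark.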

Scraping responsibly

Scraping should also be done responsibly.

Scrape safely and legally:

- Check robots.txt (example.com/robots.txt)

- Respect the site's Terms of Service

- Use rate limiting (time.sleep(1))

- Avoid scraping personal or private data

- Identify your scraper with a User-Agent header

Example:

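```python
import time

import requests

url = "https://example.com"

# Identify the scraper so site owners know who is making the requests.
headers = {"User-Agent": "Mozilla/5.0 (compatible; MyBot/1.0)"}

response = requests.get(url, headers=headers, timeout=10)

# Pause before the next request to limit load on the server.
time.sleep(1)
```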
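Checking robots.txt can be automated with Python's standard `urllib.robotparser` module. The sketch below parses robots.txt text that is already in memory (a made-up sample) so it runs offline; against a live site you would instead call `set_url()` with the robots.txt URL and then `read()`. The helper name `is_allowed` is hypothetical.

```python
from urllib.robotparser import RobotFileParser


def is_allowed(robots_txt: str, user_agent: str, url: str) -> bool:
    """Return True if the given robots.txt text permits user_agent to fetch url."""
    parser = RobotFileParser()
    # parse() takes the file's lines directly, so no network request is made here.
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


# Hypothetical robots.txt content for illustration.
SAMPLE_ROBOTS = """\
User-agent: *
Disallow: /private/
"""

print(is_allowed(SAMPLE_ROBOTS, "MyBot/1.0", "https://example.com/products"))      # True
print(is_allowed(SAMPLE_ROBOTS, "MyBot/1.0", "https://example.com/private/data"))  # False
```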

Common Mistakes + Fixes

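| Mistake | Fix |
| --- | --- |
| Incorrect XPath | Inspect the page's HTML in browser DevTools and test the expression. |
| Pagination ignored | Loop over every page. |
| Empty results | Check whether the content is rendered by JavaScript. |
| Getting blocked | Add delays and a User-Agent header. |
| Encoding errors | Pass response.content (bytes) to lxml rather than response.text. |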

Comparison Table

| Feature | lxml | BeautifulSoup |
| --- | --- | --- |
| Speed | Very high | Moderate |
| XPath support | Yes | No (CSS selectors and find methods) |
| Memory usage | Low | Higher |
| Implementation | Optimized C backend | Pure Python |
| Learning curve | Moderate | Easy |

FAQ

1. Why use lxml in Python?

It parses HTML/XML efficiently and makes extracting structured data straightforward.

2. Is XPath better than CSS selectors?

XPath is more flexible for complex queries, such as matching on text content or selecting a parent element.

3. Can lxml scrape JavaScript-rendered sites?

Not directly. For pages rendered by JavaScript, use Selenium or Playwright.

4. How do I install lxml on Windows?

Run pip install lxml; prebuilt wheels are available.

5. Why is my XPath returning no results?

The element may be loaded dynamically by JavaScript, or the XPath may not match the page's actual structure.

6. Is web scraping legal in the USA?

Scraping public data is usually not illegal, but you should never ignore a site's Terms of Service or robots.txt.

7. How can I make scraping faster?

Reuse connections (for example, with a requests.Session), limit the number of requests, and optimize your XPath expressions.
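As a sketch of the XPath side of that advice, lxml lets you compile an expression once with `etree.XPath` and reuse it on every page instead of re-processing the query string each time. The `$cls` variable, the `extract_titles` helper, the class names, and the sample markup below are illustrative assumptions.

```python
from lxml import etree

# Compile once; $cls is an XPath variable supplied when the expression is called.
find_titles = etree.XPath("//div[@class=$cls]/h3/text()")


def extract_titles(html_bytes: bytes, css_class: str = "product") -> list:
    """Parse one page and run the precompiled XPath against it."""
    tree = etree.HTML(html_bytes)
    # Calling the compiled XPath object evaluates it; keyword args bind variables.
    return [t.strip() for t in find_titles(tree, cls=css_class)]


page = (b"<html><body>"
        b"<div class='product'><h3>Lamp</h3></div>"
        b"<div class='ad'><h3>Promo</h3></div>"
        b"</body></html>")

print(extract_titles(page))        # ['Lamp']
print(extract_titles(page, "ad"))  # ['Promo']
```

In a real scraper you would call `extract_titles(response.content)` for each page, ideally fetched through a single `requests.Session` so HTTP connections are reused.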

8. Can I use lxml for XML files?

Yes, it supports both XML and HTML.
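For example, many sites publish an XML sitemap listing their pages, which can be a handy starting point for a scraper. The sketch below parses a small hypothetical sitemap; because sitemap elements live in a namespace, the XPath query needs a prefix mapping (the `sm` prefix and the `sitemap_urls` helper name are arbitrary choices).

```python
from lxml import etree

# Hypothetical sitemap; bytes input lets lxml honor the XML encoding declaration.
SITEMAP = b"""<?xml version="1.0" encoding="UTF-8"?>
<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/a</loc></url>
  <url><loc>https://example.com/b</loc></url>
</urlset>"""

# Map a prefix of our choosing to the sitemap namespace for use in XPath.
NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_urls(xml_bytes: bytes) -> list:
    """Return every <loc> URL in a sitemap document."""
    root = etree.fromstring(xml_bytes)  # XML parser, not the HTML parser
    return root.xpath("//sm:loc/text()", namespaces=NS)


print(sitemap_urls(SITEMAP))  # ['https://example.com/a', 'https://example.com/b']
```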

Compact Glossary

- DOM: The hierarchical tree representation of an HTML document.

- Selector: An expression used to pick out elements.

- Rate limiting: Limiting how often requests are sent.

- User-Agent: The client identifier sent with each HTTP request.

Beginner Checklist

- Install lxml and requests

- Inspect page HTML

- Write correct XPath

- Test on one page first

- Add pagination loop

- Implement rate limiting

- Check robots.txt

- Store data safely
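For the last checklist item, one simple option (an assumption; a database or JSON file would also work) is writing results to a UTF-8 CSV file with Python's standard `csv` module, which automatically quotes values that contain commas. `save_titles` is a hypothetical helper name.

```python
import csv
from pathlib import Path
from typing import Iterable


def save_titles(titles: Iterable[str], path: Path) -> None:
    """Write scraped titles to a UTF-8 CSV file with a header row."""
    # newline="" lets the csv module control line endings on every platform.
    with path.open("w", newline="", encoding="utf-8") as f:
        writer = csv.writer(f)
        writer.writerow(["title"])
        for title in titles:
            writer.writerow([title])
```

For example, `save_titles(titles, Path("titles.csv"))` would write the titles collected in Step 3.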

Summary

XPath-based lxml web scraping is fast, accurate, and reliable, even for beginners. With etree, a properly configured HTML parser, and safe pagination, you can build fast, responsible scraping scripts for real-world projects in the USA and elsewhere.
